Color Pipeline specialist Alex Fry talks about the benefits of ACES for VFX.

Why does ACES matter to VFX? From a certain perspective it doesn’t; we've already been working this way for years, right? Everyone has a flow chart somewhere in their facility, boxes pointing into other boxes, arrows representing this or that. But the reality has been that most people in the building have subtly different ideas about what each box and arrow means, and how important they are: subtle terminology differences, and different assumptions about what those terms mean. And that's before you even think about talking to someone from another facility about those very same concepts.

Breaking down statements that sound simple, like "we have a show LUT", into something meaningful has previously been a very difficult exercise, full of conference calls on bad speakerphones, with lots of muffled yet agitated cries of "We're in Linear, damn it! LINEAR!".

“ACES GIVES US A COMMON LANGUAGE TO WORK WITH, AND A COMMON SET OF WELL-DEFINED HAND-OFF POINTS BETWEEN THE DIFFERENT PARTS OF THE SYSTEM”

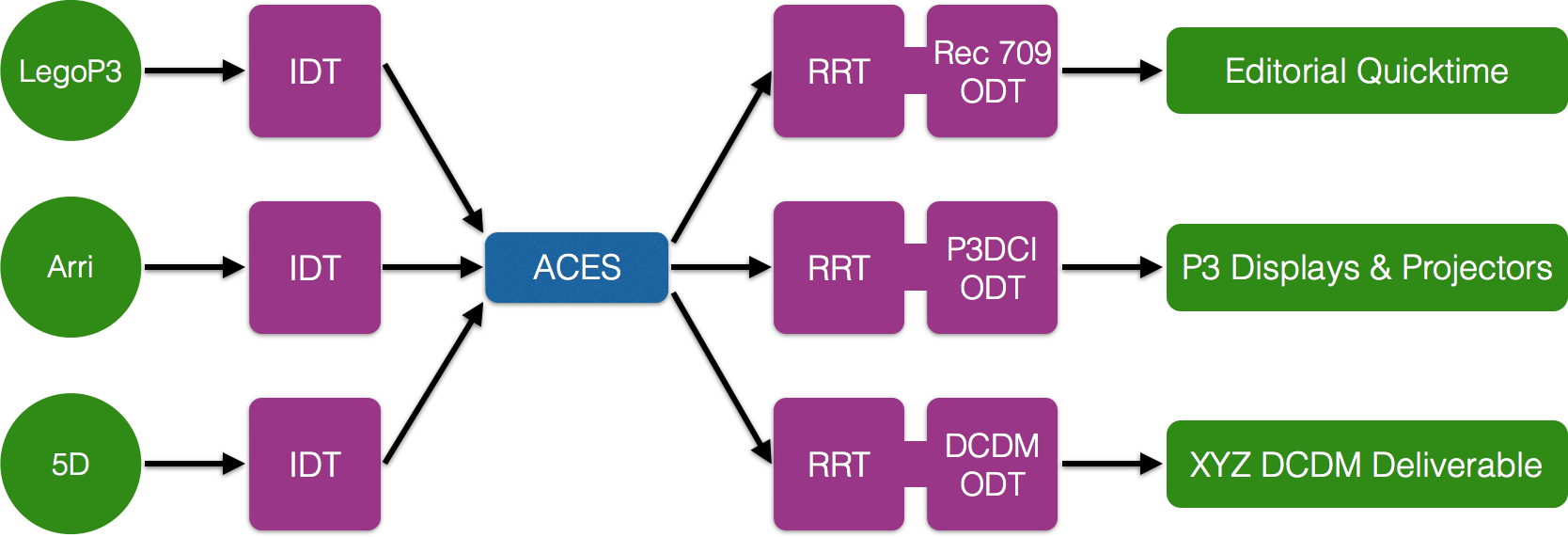

One of the great things ACES gives us is a common language to work with, and a common set of well-defined hand-off points between the different parts of the system. We can talk about, analyze and debug parts of our ingest pipeline in total isolation from our display pipeline. That may sound like an obvious distinction, but in the world of "we have a show LUT" these issues are hopelessly entangled.

The “building block” flexibility of ACES spans not just individual people, but teams and companies. I can talk to someone on set about a custom Input Transform, pass it into Nuke, grade in a Baselight, finish in a Flame, and archive it for an as-yet-uninvented display. It doesn’t matter that I have to pass through at least three different colour workflow implementations, across multiple platforms, at different points in time. The common terminology and standardized hand-off points make it easy to do, and easy to describe.

“ACES PROVIDES A ROBUST, HIGH DYNAMIC RANGE, WIDE GAMUT DISPLAY TRANSFORM.”

The other important feature of ACES is that it provides a robust, well-tested, high dynamic range, wide gamut display transform. In general, most display transforms used “in the wild” are either simple 1D curves, or complex show-specific 3D transforms created using the time-honored "twiddle the knobs on the DI system" method. Each has its own issues. Simple 1D transforms, like Nuke's default Rec709 OETF, are well understood and invertible, but begin to fall apart as colour and intensity get high. The seemingly simple issue of clipping once values move above 1.0 leads to all sorts of subtle artistic issues: Why does that atmos layer look terrible? Because there isn’t enough light filtering through the scene. Why is that? Because the sky has been graded down. Why has the sky been graded down? Because it was clipping. Why was it clipping? Because we were using a too-simple 0->1 transform on a high dynamic range floating point image. You can move to a "linear to light workflow", but it's wasted effort if the "light" that you're "linear to" is the light coming out of the display, not the light in front of the camera lens. And realistically you can only work in a truly "linear to light in the scene" (scene linear) way if you have a proper high dynamic range-capable display transform. The ACES Display Transforms give you that.
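
The clipping problem described above can be shown with a few numbers. The sketch below is illustrative only: it applies the standard Rec.709 OETF with a hard clamp to 0->1, then compares it with a simple `x / (1 + x)` highlight rolloff placed in front of the same curve. The rolloff is an assumption chosen for brevity and is not the ACES display transform; the function names and the sample scene values are likewise hypothetical.

```python
def rec709_oetf_clipped(x: float) -> float:
    """Rec.709 OETF on a scene-linear value, hard-clamped to the 0->1 range first."""
    x = min(max(x, 0.0), 1.0)
    if x < 0.018:
        return 4.5 * x
    return 1.099 * x ** 0.45 - 0.099


def rolloff_then_oetf(x: float) -> float:
    """Compress highlights with x / (1 + x) before encoding.

    This is a stand-in for an HDR-capable display transform,
    NOT the ACES one; it only shows the principle.
    """
    compressed = max(x, 0.0) / (1.0 + max(x, 0.0))
    return rec709_oetf_clipped(compressed)


# Hypothetical scene-linear samples: mid grey, diffuse white, bright sky, brighter sky.
scene_values = [0.18, 1.0, 4.0, 16.0]

clipped = [rec709_oetf_clipped(v) for v in scene_values]
rolled = [rolloff_then_oetf(v) for v in scene_values]

for v, c, r in zip(scene_values, clipped, rolled):
    print(f"scene {v:6.2f} -> clipped {c:.3f} | rolloff {r:.3f}")
```

With the hard clamp, every value at or above 1.0 lands on the same display code, so the only way to see detail in the sky is to grade it down in the scene. With a rolloff in front of the curve, those values stay distinct on the display while keeping their true scene intensities.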

“IF YOU BUILD A UNIVERSE WHERE THE SUN OUTSIDE AND A CHARACTER INSIDE BOTH HAVE TO LIVE IN THE NARROW BAND BETWEEN 0->1, NOTHING WILL RESPOND REALISTICALLY.”

The same issue arises even when you're working in a purely CG world. With modern physically-based renderers, realism suffers if things aren't set up in a way that mirrors the real world. No matter how physically accurate your renderer is, if you build a universe where the sun outside and a character inside both have to live in the narrow band between 0->1, nothing will respond realistically. Lights won't bounce enough, things won't flare enough, ‘pings’ won't pop enough. By throwing enough talent and artistry at this problem, good results can be achieved. But you're really stacking the deck against your crew if you don't give them a good, solid, well-tested display transform to work through.

“HOW DO I TREAT THIS RANDOM JPG FROM THE DIRECTOR'S IPHONE AS A HIGH DYNAMIC RANGE SKY FOR THE BACKGROUND OF THIS SHOT? ACES CAN HELP.”

As well as dealing with the classical colour management problems, like "how do I get this high end cinema camera to look good on this high end DCI projector?", ACES's collection of well-defined invertible transforms also allows for all sorts of weird and wonderful solutions to questions like "how do I treat this random jpg from the director's iPhone as a high dynamic range sky for the background of this shot?" or "how do I create a temporary look transform that matches this weird CDL from set that was done in a different space, and targets a completely different display?" These are the sort of things that you never want to have to do, yet come up all the time in real production.
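
To make "invertible" concrete, here is a minimal sketch of a display encoding and its exact inverse, using the standard sRGB piecewise curve as a stand-in. It is not an ACES transform: running an inverse ACES display transform on a JPG would also undo the tone curve and push highlights back above 1.0, which this simple pair does not do. The function names and the sample 8-bit code value are hypothetical.

```python
def srgb_encode(linear: float) -> float:
    """Display-linear 0->1 value to an sRGB-encoded 0->1 value."""
    if linear <= 0.0031308:
        return 12.92 * linear
    return 1.055 * linear ** (1.0 / 2.4) - 0.055


def srgb_decode(encoded: float) -> float:
    """Exact inverse of srgb_encode: sRGB-encoded 0->1 back to display-linear."""
    if encoded <= 0.04045:
        return encoded / 12.92
    return ((encoded + 0.055) / 1.055) ** 2.4


# Round trip: encode followed by decode should return the starting value.
samples = [0.0, 0.002, 0.18, 0.5, 1.0]
round_trip = [srgb_decode(srgb_encode(v)) for v in samples]

# Hypothetical 8-bit pixel from a JPG, taken back to a linear value
# that can be handed to the rest of a linear pipeline.
jpg_code = 128
linear_from_jpg = srgb_decode(jpg_code / 255.0)
print(f"8-bit code {jpg_code} -> linear {linear_from_jpg:.4f}")
```

The point is that a transform with a well-defined inverse lets display-referred material be walked back towards the working space in a predictable, repeatable way, rather than being eyeballed.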

ACES is at its best when you're running things clean and by the book, but it's flexible enough to survive contact with reality. And isn't that what we really want?

Alex Fry is a compositing and colour pipeline supervisor who has spent the last 15 years making boring pictures awesome and dowdy pixels pretty at Animal Logic, Dr.D Studios, Rising Sun Pictures, Weta Digital and Fin Design + Effects. Alex was the driving force behind ACES on “The Lego Movie” at Animal Logic, and has gone on to implement ACES on a variety of projects ranging from television commercials to summer blockbusters.

For more info on how Alex has used ACES, see his presentation from Siggraph 2015.